The Claude Coding Vibes Are Getting Worse

For a while now I've been evaluating Claude Code on my personal projects. [1] See my post on self-hosting models for Claude Code. [2]

Public sentiment toward Claude Code has grown quite positive recently, starting with the release of Opus 4.5 in November last year [3] and continuing with the release of Opus 4.6 in February. Then there was OpenAI's Pentagon deal in late February and Anthropic's response in March.

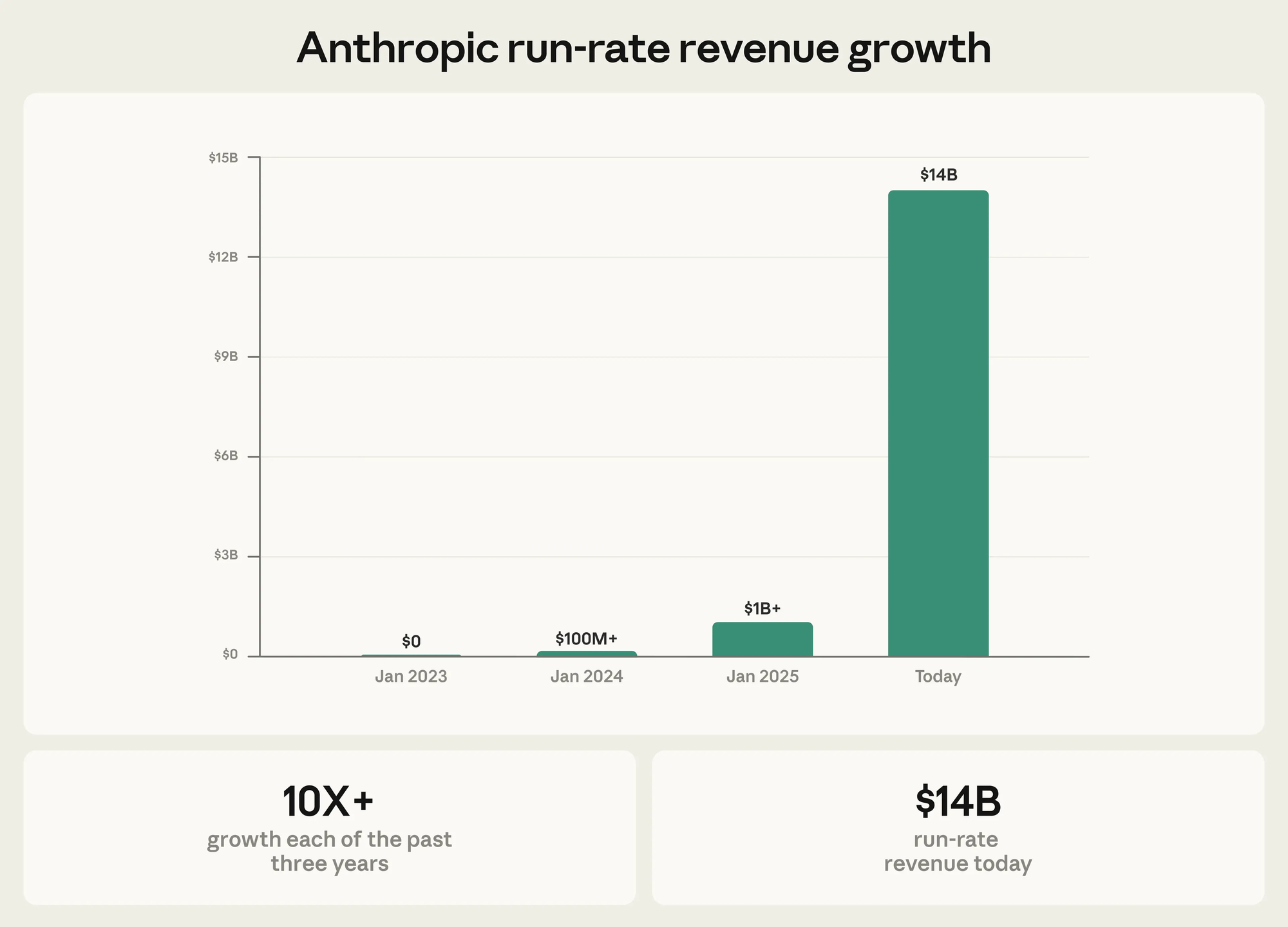

With all of that came a shift in usage to Anthropic, which is nicely captured in this revenue chart:

From Anthropic's press release.

So if Anthropic is growing like crazy from positive press, why are people starting to sour now?

That revenue growth is nice, but it also means there was a large increase in usage that Anthropic has to handle. Their capacity can't meet the demand, so they are diminishing the product to keep up. Above all, the way they are handling these changes feels adversarial. Claude Code would get an update, something would feel worse, and I'd have to look into what it was and whether I could reverse it. [4]

In late March the "clear context and execute" option in plan mode was removed in Claude Code release 2.1.81; the option showClearContextOnPlanAccept was added and defaulted to false. The justification given by Anthropic was: "This was unshipped because you don't need this anymore with 1M context!". Even with a 1M context, performance degrades as the context percentage goes up, and I literally had to stop working until I could restore the option. Anthropic eventually relented and changed the default back to true.
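For anyone who wants to pin the option rather than rely on the default, here is a minimal sketch of setting it in a JSON settings file. The option name comes from the release discussed above; the helper name `set_claude_setting` is hypothetical, and the file location shown in the comment (`~/.claude/settings.json`) is an assumption about where user-level settings live, so check your own install before running it.

```python
import json
from pathlib import Path


def set_claude_setting(settings_path: Path, key: str, value) -> dict:
    """Set one key in a JSON settings file, preserving every other key."""
    settings = {}
    if settings_path.exists():
        text = settings_path.read_text(encoding="utf-8")
        if text.strip():
            settings = json.loads(text)
    settings[key] = value
    # Create the parent directory if this is the first setting written.
    settings_path.parent.mkdir(parents=True, exist_ok=True)
    settings_path.write_text(json.dumps(settings, indent=2) + "\n", encoding="utf-8")
    return settings


# Example call (the path is an assumed location, adjust for your setup):
# set_claude_setting(Path.home() / ".claude" / "settings.json",
#                    "showClearContextOnPlanAccept", True)
```

Writing the key explicitly means a future change to the default would not silently flip the behavior again.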

Then in early April Anthropic stopped allowing third-party programs to use tokens from users' subscriptions to the Pro and Max plans. Only credits for API tokens are now allowed. There was no official announcement. Instead, they just updated the terms and the usage policy docs. Some staff also confirmed the change on social media.

Also in early April, an update changed the cache TTL from 1 hour to 5 minutes. The response said this was a bug, corrected it, and noted that it was implemented as part of "ongoing optimization work - [5] it wasn't a regression, on balance it lowers total cost for users across the request mix."
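To see why the TTL matters for a coding session, here is a rough sketch that counts how many requests would arrive after the cache has expired. The session gaps are made up for illustration, the function name is hypothetical, and the model assumes every request refreshes the TTL; it says nothing about actual pricing.

```python
def count_cache_misses(gaps_minutes, ttl_minutes):
    """Count requests that arrive after the cached prefix has expired.

    gaps_minutes[i] is the idle time before the next request.
    Assumption: every request, hit or miss, restarts the TTL clock.
    """
    return sum(1 for gap in gaps_minutes if gap > ttl_minutes)


# Hypothetical session: idle minutes between consecutive requests
# (reading a diff, running tests, getting coffee, a long meeting).
session_gaps = [2, 4, 7, 12, 3, 45]

misses_5m = count_cache_misses(session_gaps, ttl_minutes=5)
misses_1h = count_cache_misses(session_gaps, ttl_minutes=60)
print(misses_5m, misses_1h)  # 3 0
```

With these made-up gaps, half the requests land on a cold cache at 5 minutes and none do at 1 hour, which is the kind of difference someone who pauses to think between prompts would notice.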

Finally, there is this somewhat vague issue that mostly captures the feeling about how "Claude Code is unusable for complex engineering tasks with the Feb updates". All these issues on the Claude Code project on GitHub were shared around the internet and are now flooded with complaints. They are marked as closed or not planned, but the underlying concerns remain.

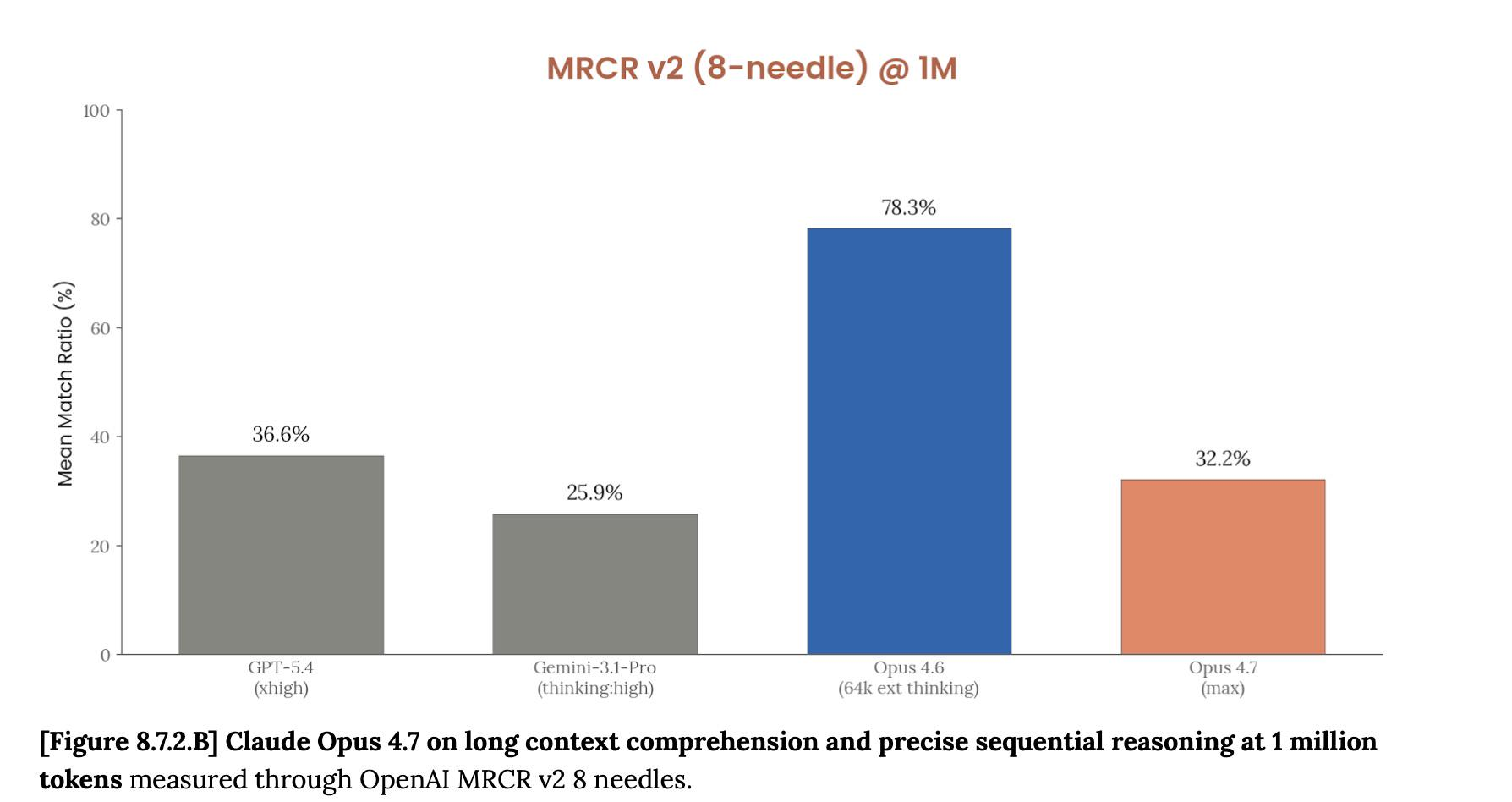

All of this leads up to today, when Opus 4.7 dropped and the release notes included this section on how extended thinking budgets were removed:

Extended thinking budgets are removed in Claude Opus 4.7. Setting thinking: {"type": "enabled", "budget_tokens": N} will return a 400 error. Adaptive thinking is the only thinking-on mode, and in our internal evaluations it reliably outperforms extended thinking.
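In client code, the quoted change roughly means a fixed budget can no longer be sent to the new model. The sketch below builds only the thinking field of a request body and makes no network call. The function name and the `budgets_supported` flag are hypothetical, and `{"type": "adaptive"}` as the adaptive value is an assumption rather than something the release note above spells out.

```python
def thinking_param(budget_tokens=None, budgets_supported=True):
    """Build the `thinking` field of a request body.

    budgets_supported=False models the quoted behavior, where sending
    a fixed budget would be rejected with a 400 error.
    """
    if budget_tokens is None or not budgets_supported:
        # Assumed shape for adaptive thinking; verify against the API docs.
        return {"type": "adaptive"}
    # The shape named in the release notes for models that still accept it.
    return {"type": "enabled", "budget_tokens": budget_tokens}


# An older model keeps the requested budget; the new one falls back.
print(thinking_param(8000))
print(thinking_param(8000, budgets_supported=False))
```

Note that the fallback silently drops the requested budget, which is exactly the loss of control being complained about here.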

Yet another degradation that I cannot remove or reverse.

As for the statement that "in our internal evaluations it reliably outperforms extended thinking", this is a graphic from the issue thread: [6]

To be sure, these changes are designed to mitigate the impact of oversubscribing users while Anthropic goes through this growth period. [7] However, the most frustrating part is that these changes impact the quality of life for all users of Claude Code, even those paying for tokens through the API.

I know I use the word too much, but this does feel like we are moving through the enshittification curve, cresting over the "good to their users" phase. So while I am making a reference to Cory, I might as well quote this thought from him:

Doubtless some of you are affronted by my modest use of an LLM. You think that LLMs are "fruits of the poisoned tree" and must be eschewed because they are saturated with the sin of their origins. I think this is a very bad take, the kind of rathole that purity culture always ends up in.

Refusing to use a technology because the people who developed it were indefensible creeps is a self-owning dead-end. You know what's better than refusing to use a technology because you hate its creators? Seizing that technology and making it your own. Don't like the fact that a convicted monopolist has a death-grip on networking? Steal its protocol, release a free software version of it, and leave it in your dust

The AI bubble sucks. AI itself is a normal technology

I can't use Anthropic's models with OpenCode, but I think I will give it a try with other models. [8] Things move so fast that I can't imagine Anthropic will keep the lead with their models forever. The open models and harnesses will catch up, and when that happens Anthropic won't be able to degrade those.

At my employer we are also really trying to test out the tooling for work tasks to decide if we want to use it more. ↩︎

Though things move fast so this is now out of date. ↩︎

Also with developers taking vacation in December and giving it a try for the first time. ↩︎

Also tucking away links as I fixed things knowing I would inevitably have to write this. ↩︎

This was an em dash, and even though it was a quote, I strive to purge those from my site. ↩︎

And originally from Reddit. ↩︎

Yes, I just finished a class on writing politically engaged essays, so of course I'm going to literally include the "to be sure" paragraph. ↩︎
